January 25, 2022

How to write the scenarios for a remote user test?

In UX design, carrying out a user test is always a key step in a project. Whether you are building or redesigning a website or a mobile application, testing helps you build a better user experience. But for your remote user study to be effective, it is essential to write a test protocol. This will give you a realistic view of users' needs and frustrations when you analyze their feedback. In this article, through best practices and examples, we invite you to discover the key steps to take into account when writing the tasks for your remote user test.

Summary:

Remote user test protocol: what you need to know

What is a test scenario?

How to break down your test steps

What is the purpose of a test protocol?

User test protocol or functional test?

Writing user test instructions: 5 key questions to ask yourself

1. How to define the test objectives?

2. Your best ally for writing user tasks? Empathy

3. Writing tasks: exploratory or specific?

4. Why limit yourself to 10 tasks in a user test?

5. How to test your scenario?

User test scenarios: our examples

Examples of test scenarios for a transport website

Examples of test scenarios for a banking website

Conclusion

Remote user test protocol: what you need to know

What is a test scenario?

When conducting remote user testing, interviews or even guerrilla testing, it is important to immerse the user in the process. To do this, you need to build what is called a scenario or test protocol. The purpose of this list of instructions is to immerse the participant in a concrete use case. The subject of the test can be a product, a service, a website, or even a concept that is still at the idea stage.

Establishing instructions allows you to create the right conditions for the expression of feedback and verbatims. This first task of writing the user test scenario is crucial, as it will give a certain shape and angle to the results later on.

The scenario of a remote user test is particularly important in this methodology, because the user feedback is collected asynchronously. We send the test protocol to the users, who perform the tasks autonomously and then submit their assignment. In the case of the Ferpection platform, we apply post-moderation. This is in contrast to a one-to-one interview, in which the moderation is done live and it is possible to refocus the conversation if necessary.

How to break down your test steps

The right balance must be found: the protocol should be open enough for the participant to express themselves freely, and not so closed that they feel they are answering a quantitative questionnaire. In interview guides, these levels are described as directive, semi-directive, or exploratory.

In concrete terms, in a post-moderated remote user test, the study scenario is presented in the form of a 10-step brief. This brief breaks down the experience into sections and invites the user to express his or her views on each of these stages.

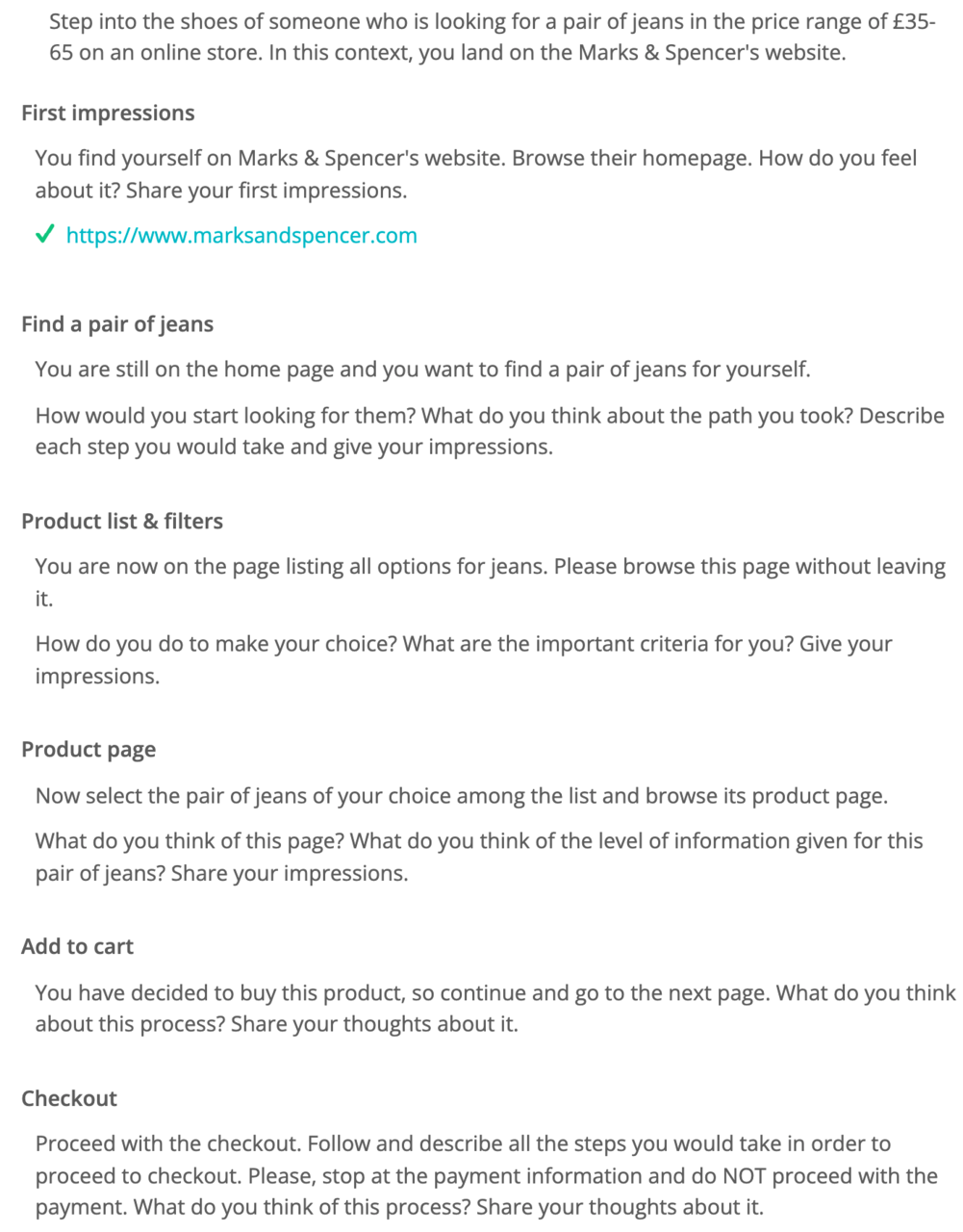

Here is an example of the protocol used for our retail benchmark in the UK, which can be duplicated on many sites. The goal here was to build a protocol covering most of the purchasing funnel, while remaining simple and open enough to be reused across the different retailers tested.

What is the purpose of a test protocol?

The user test scenario is used to gather feedback from participants and to determine the strengths and weaknesses of your experience. It also helps you determine the direction you will give to your project roadmap.

Once written, your test protocol can be sent to users. Each participant then tests the experience by following the instructions. For each step, they submit at least one piece of written feedback answering the questions raised, along with a screenshot of the interface to illustrate their point.

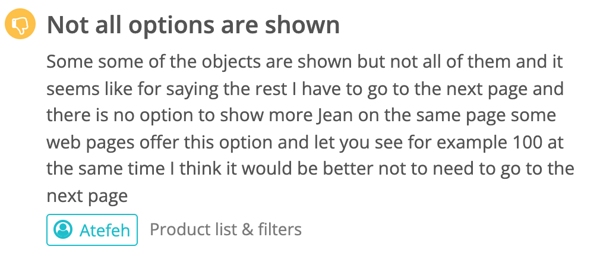

More concretely, if we take the above example of a test scenario for an e-commerce site, here is one of the pieces of user feedback we collected on the "Product list & filters" step:

The idea for this step was to isolate the product grid page and to find out whether users could easily choose their item, in terms of visuals, navigation and use of filters. Once all the feedback has been collected, this also allows us to filter it and browse only the feedback that refers to this step. Hence the importance of segmenting your protocol! By contrast, a test scenario that is too exploratory requires a lot of data processing to associate each piece of feedback with a page or specific element of your experience.
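To illustrate why segmenting pays off at analysis time, here is a minimal Python sketch. It assumes each piece of feedback is stored with the name of the step it answers; the record layout, the sample texts and the "Home page" step name are hypothetical and are not taken from the Ferpection platform.

```python
from collections import defaultdict

# Hypothetical feedback records: each one is tagged with the protocol step it answers.
feedback = [
    {"step": "Home page", "text": "The banner hides the search bar."},
    {"step": "Product list & filters", "text": "Filters are easy to find."},
    {"step": "Product list & filters", "text": "Product photos are too small."},
]


def group_by_step(records):
    """Group feedback texts under the step they were written for."""
    grouped = defaultdict(list)
    for record in records:
        grouped[record["step"]].append(record["text"])
    return dict(grouped)


by_step = group_by_step(feedback)

# Browse only the feedback that refers to one step.
for text in by_step["Product list & filters"]:
    print(text)
```

With an unsegmented, fully exploratory protocol, the "step" field would have to be assigned by hand for every piece of feedback before this kind of filtering becomes possible.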

User test protocol or functional test?

Be careful not to confuse a user test protocol with a functional test protocol! The two methodologies are quite different. In the first, we ask ordinary users to try out the experience and give feedback on the site, its value proposition, its ergonomics, etc. In the second, the approach is much more technical: we aim to verify that the service delivered conforms to the initial specifications, and we try to identify bugs, latency and technical problems, either with automated scripts or with professional testers working in the lab. In other words, a functional test verifies that the experience works properly, not whether users like it.

At Ferpection, we are experts in remote user testing. For functional testing needs, we advise you to turn to our colleagues at Stardust, who will be able to help you with this need.

Writing user test instructions: 5 key questions to ask yourself

1. How to define the test objectives?

What's the #1 mistake in writing a user test protocol? Trying to test everything! This is the best way to learn nothing, because the objectives of the study will be too far apart to perform consistent user research. It is therefore important to clearly define the objectives in your test scenario, in order to choose the right methodology, and then write a coherent protocol.

How do you define the objectives of the test? This depends on the stage of your project and your level of knowledge of the user experience and perceptions. The earlier you are in the project, and the less information you have to formulate your research hypotheses, the more exploratory your objectives will be.

Keep in mind that if you are very early in the project and need to better define users' needs, user interviews or a focus group will be more relevant than a remote user test.

Here are some examples of objectives for a remote user test:

- Ensure usability

- Test the information architecture

- Test the perception of the design

- Validate user appetite

- Verify the experience across a large number of devices

- Prioritize the features to develop

2. Your greatest ally when it comes to writing a user testing protocol? Empathy.

Always bear in mind that the objective of a test is to confront your hypotheses with what users actually experience, and at the same time to deepen your knowledge of those users, not to convince them of anything or explain anything to them. To generate useful feedback for your research, you therefore need to write tasks that serve as a framework for the test, not a user guide or a set of marketing messages.

An example of the kind of detailed instruction to avoid:

“Click the user icon to access your account. What do you think of the browsing experience?”

Imagine yourself in the place of the users participating in the tests and use real-world scenarios to engage them in a realistic experience they can actually envisage themselves going through in real life.

An example of an engaging instruction:

“Search for a flight to New York for tomorrow. Describe the steps taken and what the experience was like.”

3. Writing tasks: exploratory or specific?

As stated in the introduction, the written tasks you create need to match your objectives. It is these very objectives, therefore, that will enable you to orient your tasks, which can be either exploratory or specific in nature.

When you only have a small amount of information about how your interface is being used, it can be useful and interesting to create exploratory-type tasks that will enable you to collect a highly diverse range of feedback from the test and develop a better understanding of your users' experience.

An example of an exploratory instruction:

“Go to the home page and tell us your first impressions.”

When you are very familiar with your users' experience and the various potential problems that can occur within that experience, on the other hand, you will then create more specific kinds of written tasks designed to collect feedback about these particular scenarios. Always try to structure these instructions in such a way that they set an objective rather than provide an explanation.

An example of a specific type of instruction:

“Last night, you began watching Frankenstein, but you're not interested in watching the rest of it. How do you go about stopping the film appearing on your home page? Describe the steps you take.”

4. Why limit yourself to 10 tasks in a user test?

Users' attention spans only extend so far, and this has a direct impact on the quality of the feedback collected.

Though there is no magic number where the task count is concerned – as it also depends on how difficult the tasks are – after carrying out hundreds of remote user tests, we have come to the conclusion that to avoid negatively impacting the quality of the feedback obtained, the number of stages involved should be limited to a maximum of 10.
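If you keep your protocol in a structured form, this ceiling is easy to check automatically before launch. The following Python sketch is only an illustration: the `Step` class, the `check_protocol` function and the messages it returns are hypothetical names chosen for this example, and the limit of 10 is the one recommended above.

```python
from dataclasses import dataclass

MAX_STEPS = 10  # ceiling recommended in this article, not a universal rule


@dataclass
class Step:
    name: str          # short label for the step
    instruction: str   # the text shown to the participant


def check_protocol(steps):
    """Return a list of problems found; an empty list means the checks passed."""
    problems = []
    if len(steps) > MAX_STEPS:
        problems.append(
            f"protocol has {len(steps)} steps; the maximum is {MAX_STEPS}"
        )
    for number, step in enumerate(steps, start=1):
        if not step.instruction.strip():
            problems.append(f"step {number} ({step.name}) has no instruction")
    return problems


protocol = [
    Step("First impressions", "Go to the home page. What are your first impressions?"),
    Step("Search", ""),
]
for problem in check_protocol(protocol):
    print(problem)
```

A check like this only catches structural slips. It does not replace trying the scenario out with a real person.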

5. How to test your scenario?

A remote user-test protocol cannot be modified once it's been launched. You, therefore, need to verify its ability to provide you with meaningful and relevant feedback.

To do this, we recommend testing your scenario with someone from outside the project who has never seen the interface and will need to follow the written instructions in order to write their verbatim account of the experience.

This will enable you to expose any inconsistencies in your protocol and in the interface being tested before launching the test with a larger sample of testers.

User test scenarios: our examples

To help you write your user test protocols and to make the tasks easier for participants to carry out, here are two examples of test scenarios written by our UX research consultants. These complete scenarios are made up of an introduction followed by a series of steps, each consisting of:

- The name of the numbered step. This is used to help you to find your way through the protocol.

- The test instruction itself.

After these steps, you can ask a number of post-test questions, either specific to the user study or based on a standardized UX questionnaire such as SUS or AttrakDiff.
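For readers who have not used SUS before, here is a minimal Python sketch of its standard scoring rule: ten statements rated from 1 to 5, where odd-numbered items contribute the rating minus 1, even-numbered items contribute 5 minus the rating, and the total is multiplied by 2.5 to give a score out of 100. The function name `sus_score` and the sample answers are illustrative only.

```python
def sus_score(responses):
    """Compute a System Usability Scale score (0-100) from ten 1-5 ratings."""
    if len(responses) != 10:
        raise ValueError("SUS requires exactly 10 responses")
    if any(not 1 <= rating <= 5 for rating in responses):
        raise ValueError("each response must be between 1 and 5")

    total = 0
    for item_number, rating in enumerate(responses, start=1):
        if item_number % 2 == 1:
            total += rating - 1   # odd items are positively worded
        else:
            total += 5 - rating   # even items are negatively worded
    return total * 2.5


# Hypothetical answers from one participant.
print(sus_score([4, 2, 5, 1, 4, 2, 4, 1, 5, 2]))
```

The score is computed per participant. Averaging it across participants gives a single figure you can track from one test to the next.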

Examples of test scenarios for a transport website

You are planning to go on a trip to France. Go to the X website to organize your trip and buy your ticket.

- First impressions — Go to the home page. What are your first impressions?

- Search — Without clicking, how would you go about searching for a trip on this page?

- Trip search — You want to go on a trip alone, on the date of your choice, to discover the large city (50,000+ inhabitants) closest to you. Describe your journey and comment on your experience.

- Search results — You are on the results page of your search. Give your opinion and feedback on the information available to you.

- Trip choice — Select the trip that suits you and validate. Why did you select this one? What do you think of the route? Give your feelings.

- Travel information — Once on the page of your trip, give your opinion and your feeling on the available information.

- Confirmation navigation — Confirm your choice and go to the payment page (do not proceed to payment). Describe your journey and your feelings.

Examples of test scenarios for a banking website

Put yourself in the shoes of someone looking for a savings solution. You want to find out about the different offers from Bank X.

- First impressions — Go to the home page of the Bank X website. What are your first impressions of this page? Give your feelings.

- Search savings information — You want to learn about savings solutions. Where would you naturally click to find this information? Describe your journey and give your feelings.

- Savings solutions page — You are on the savings solutions page. Browse through it and give your impressions of the information and features available to you. What do you think about the different savings solutions?

- Compare offers — You want to compare the different solutions. How do you go about it? What information do you think is essential to compare?

- Livret A offer — You want to know about the Livret A offer. How do you go about it? What do you think about the presentation of this offer and its information?

- More information — You are on the Livret A offer page. Would you be ready to subscribe to the offer from this bank? Why or why not? Which elements seem convincing to you? Which ones would make you hesitate?

If you are interested in this field, do not hesitate to consult our benchmark study on the banking sector.

In conclusion

When it comes to creating a written user test protocol that will enable you to both learn as much as possible from the user experience and meet your objectives, the following three points are the most important to bear in mind:

- Define precise study objectives based on the stage the project is at and the challenges it involves.

- Be empathetic when creating the written instructions and make sure you engage the user in a realistic experience.

- Test the protocol beforehand to avoid any inconsistencies.

Want to know more about the different user research methods available to better understand your users and test your products and services? Go to our methodologies and solutions page.

If you liked this article, don't hesitate to share it on social networks and to subscribe to our newsletter!
